Local LLMs as a Code Assistant in Visual Studio Code

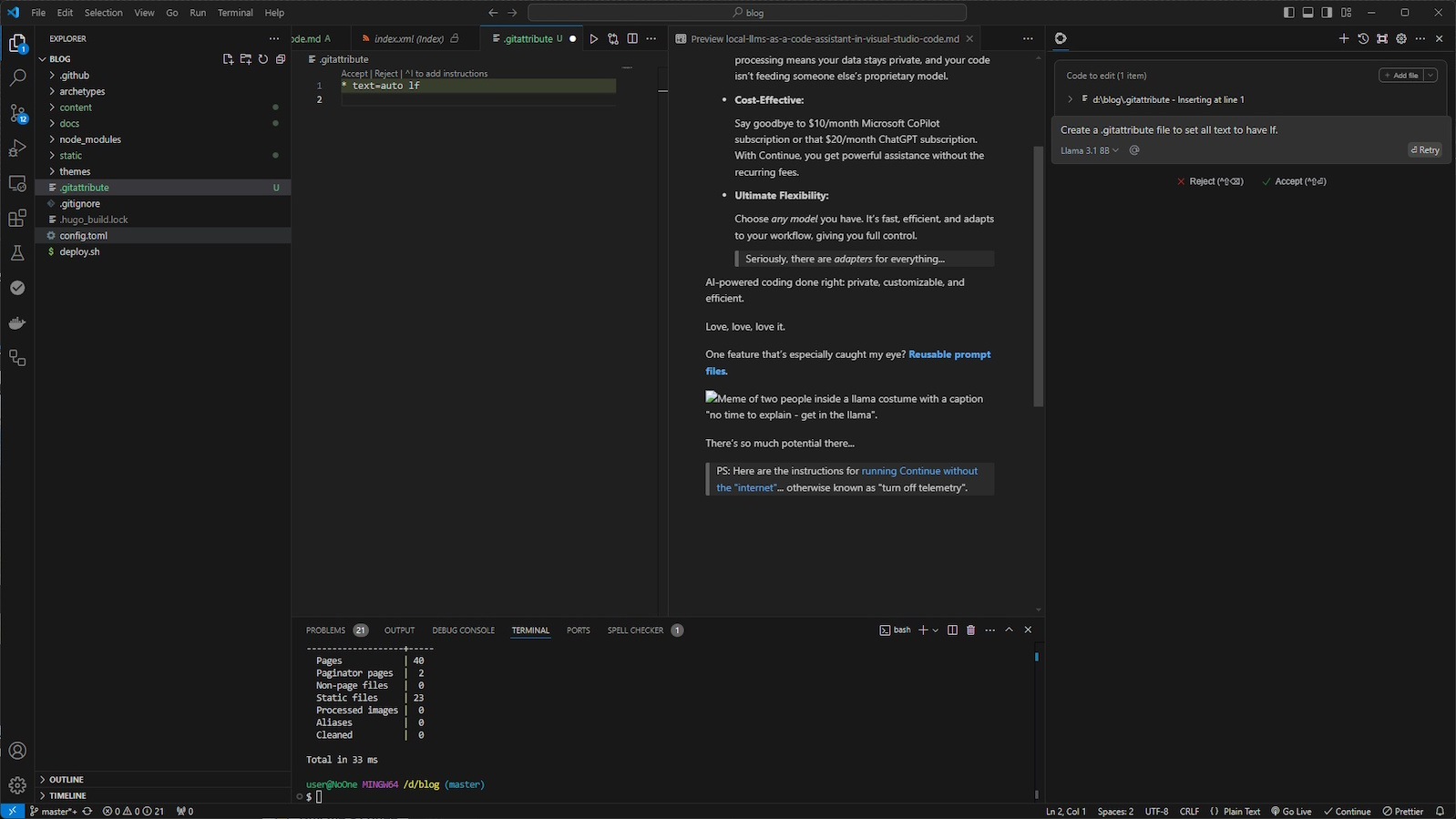

I have been using a Visual Studio Code extension called Continue, and it has become one of my favorite ways to work with local LLMs.

The short version:

Continue lets you bring AI-assisted coding into VS Code while keeping the model, prompts, and workflow under your control.

That matters more than it sounds.

Why Local Matters

Local models are not always the strongest models.

They are not always the fastest models.

But they give you something that hosted tools usually do not: control over the boundary.

With Continue, you can point the editor at a local model server such as Ollama or LM Studio, so prompts and code stay on your machine. That makes it useful when you are experimenting with code, notes, rough ideas, or anything you do not want casually shipped off to a hosted service.

That does not magically solve every privacy concern, but it gives you a much better starting point.
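To make the boundary concrete, here is a minimal sketch of what talking to a local model looks like outside the editor. It assumes Ollama is running on its default port (11434) and uses its `/api/generate` endpoint. The helper names are hypothetical, and this is not a description of how Continue talks to the model internally. The point is only that the request goes to `localhost` and nowhere else.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # With "stream": False, the generated text arrives in one "response" field.
    return body["response"]
```

Calling `ask_local_model("llama3.2", "Explain this function.")` would send the prompt to the local server, where `llama3.2` is only a placeholder for whichever model you have pulled.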

Why Continue Fits the Workflow

Continue is useful because it sits where the work already happens: the editor.

That means you can:

- ask questions about selected code
- generate or revise snippets
- use local models for quick review passes
- keep reusable prompt files near the project
- swap models depending on the task

The reusable prompt files are the feature that caught my attention.

If you have a repeatable workflow, you can write the prompt once, refine it, and keep reusing it. That is a small habit with a big payoff. The prompt stops being a disposable chat message and starts becoming part of the project tooling.
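The habit itself is easy to sketch, independent of any one tool. The example below is not Continue's prompt file format. It is a hypothetical plain-text template with `$placeholders`, loaded from a file in the project and filled in with Python's standard `string.Template`. The file contents and function names are assumptions made for illustration.

```python
from pathlib import Path
from string import Template

# Hypothetical file contents; "$language" and "$code" are placeholders
# that get filled in at render time.
EXAMPLE_PROMPT = """\
You are reviewing a small $language change.
Point out bugs and unclear names, and keep the answer under ten lines.

$code
"""


def load_prompt(path: Path) -> Template:
    """Read a prompt file from the project and wrap it as a template."""
    return Template(path.read_text(encoding="utf-8"))


def render_prompt(template: Template, **values: str) -> str:
    """Fill in the placeholders.

    A missing value raises KeyError instead of silently sending a
    half-finished prompt.
    """
    return template.substitute(**values)
```

Because the template lives in a file, it can be versioned and refined alongside the code it reviews. One caveat with `string.Template`: a literal dollar sign in the prompt text has to be written as `$$`.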

Where It Helps

I mostly like this setup for contained tasks:

- explaining unfamiliar code
- drafting tests
- reviewing a small diff
- generating shell commands
- refactoring a narrow function
- summarizing local notes

That is where local models feel practical. I do not need every interaction to be frontier-model brilliant. Sometimes I need a local assistant that can help me think through the next step without turning the workflow into a procurement decision.

The Tradeoff

The tradeoff is that local models require more patience and a little more setup.

You need to choose a model. You need to understand its limits. You need to decide when a local answer is good enough and when the task deserves a stronger hosted model.

That is not a downside to me.

That is the work.

Using AI well means understanding the shape of the tool, not pretending every model is interchangeable.

The Bottom Line

Continue gives me a private, configurable code assistant inside VS Code.

It is not magic. It is not a replacement for judgment. It is a useful way to make local models part of the daily development loop.

And that is the part I keep coming back to: local AI does not have to be the biggest hammer in the toolbox to be worth using.

Sometimes it just needs to be close by, predictable, and pointed at the right task.

-Rob