
Agentic Development and the Review-Centric Workflow

AI is going to change software teams whether we pretend to be ready or not.

The lazy version of that change is simple: give everyone an agent, tell them to go faster, and hope review catches whatever falls out the other side.

That is not a strategy.

The better version is this:

Move humans closer to design and review, then let agents help execute well-defined units of work.

That does not mean developers stop being technical. It means the team’s technical energy shifts toward architecture, decomposition, design patterns, refactoring, review, verification, and shared understanding.

In other words: less isolated AI-assisted output, more shared design, careful decomposition, parallel execution, and team review.

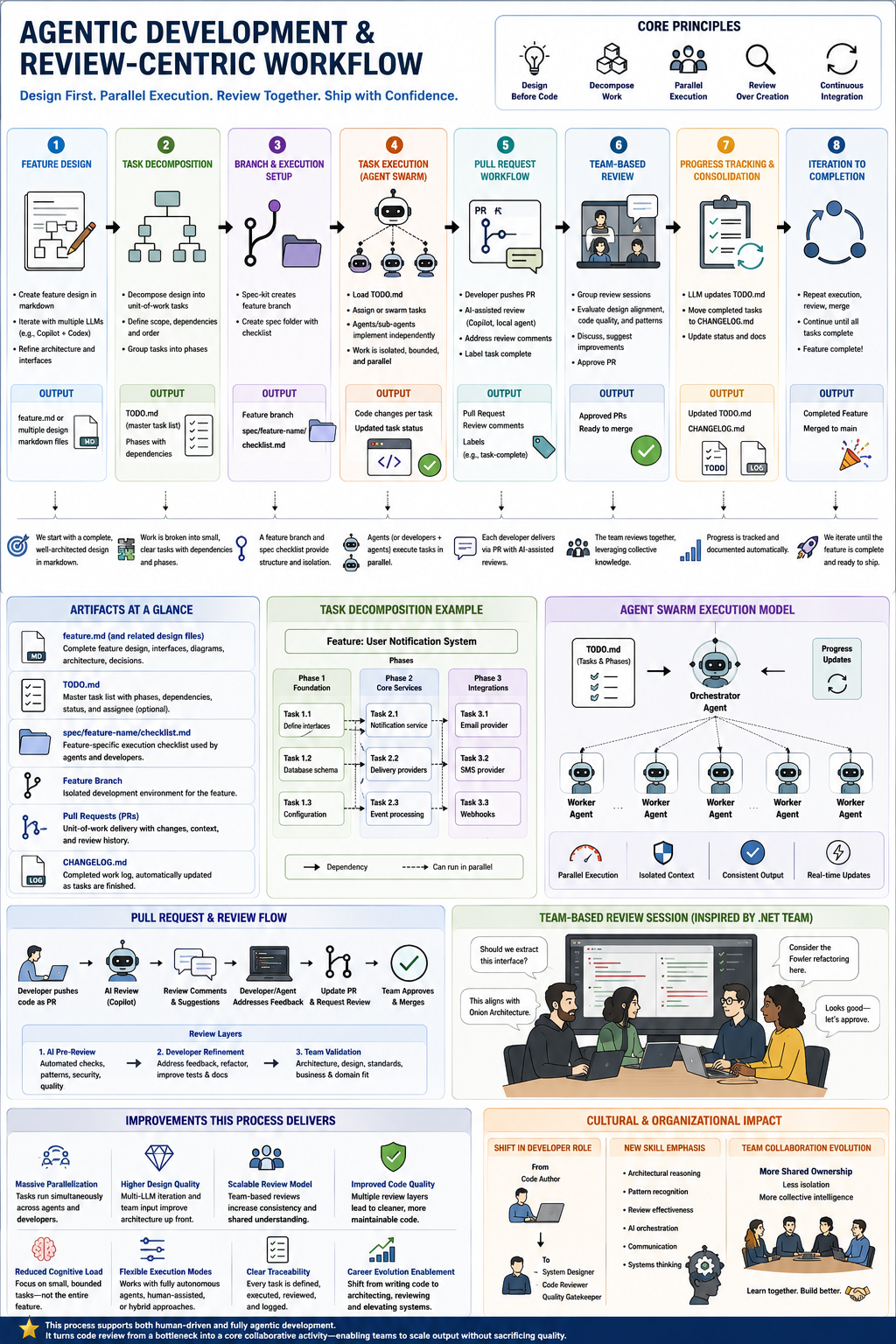

The infographic above shows the workflow I have in mind.

The Point of the Workflow

The point is not to remove humans from software development.

The point is to put humans in the loop where human judgment matters most.

AI can generate code quickly. Sometimes very quickly.

But speed is not the same thing as design quality.

Less experienced developers can now produce code that looks plausible before they understand why it should exist, how it should be shaped, what it depends on, how it will be maintained, or where it will fail.

That is the danger zone.

Not because junior developers are bad. They are not. They are learning.

The problem is that AI can let someone skip the painful middle steps where judgment normally gets built:

- reading unfamiliar code

- understanding boundaries

- seeing repeated failure patterns

- learning when a design is too clever

- learning when “just add a helper” quietly becomes technical debt

- defending a change in review

- revising work after someone points out the hidden cost

Those steps are not bureaucracy. They are how engineering taste gets formed.

So the workflow has to protect that learning loop.

Design First

The first column in the graphic is Feature Design.

That is intentional.

The team starts with a complete design in markdown. Not a 90-page architecture document. Not ceremony for the sake of ceremony. A useful design.

The design should capture:

- the problem being solved

- the intended behavior

- the interfaces involved

- expected user or system flows

- constraints

- architectural decisions

- tradeoffs

- known risks

- what is explicitly out of scope

This is where tools like ChatGPT, Copilot, Codex, Claude, or whatever agent stack you are using can help. Have them critique the design. Have them find edge cases. Have them ask annoying questions.

Annoying questions are underrated. Half of architecture is being irritated early enough that production does not get a vote later.

The output of this phase is a feature.md file or a small set of related design files.

That document becomes the shared source of intent.

Decompose the Work

The second phase is Task Decomposition.

This is where the design becomes a queue of small, ordered units of work.

The infographic calls out a shared TODO.md, and I like that because it is boring in the best possible way.

Something like this:

# TODO

## Phase 1: Foundation

- [ ] Task 1.1: Define interfaces
  - Depends on: none
  - Output: interface definitions and tests
- [ ] Task 1.2: Database schema
  - Depends on: Task 1.1
  - Output: migration and model updates

## Phase 2: Core Services

- [ ] Task 2.1: Notification service
  - Depends on: Task 1.1, Task 1.2
  - Output: service implementation and tests

The point is not that TODO.md is magic.

The point is that the team agrees on the work before agents start editing code against different assumptions.
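Because each task in the example above names its dependencies, the queue can be checked mechanically before anyone starts work. The sketch below is a hypothetical helper, not part of any tool. It assumes task lines shaped like the TODO.md example, with a header line followed by a "Depends on:" line. It uses Python's standard graphlib module (3.9+) to produce an order in which every task follows its dependencies. A typo in a dependency or a circular dependency fails loudly, which is the kind of sequencing problem worth catching early.

```python
import re
from graphlib import TopologicalSorter

# Hypothetical format, following the TODO.md example: a task header line,
# then a "Depends on:" line that is either "none" or a list of task IDs.
TASK_RE = re.compile(r"^\s*- \[[ xX]\] Task (\d+\.\d+): (.+)$")
DEPS_RE = re.compile(r"^\s*- Depends on: (.+)$")


def parse_todo(text: str) -> dict[str, set[str]]:
    """Map each task ID to the set of task IDs it depends on."""
    deps: dict[str, set[str]] = {}
    current = None
    for line in text.splitlines():
        if m := TASK_RE.match(line):
            current = m.group(1)
            deps[current] = set()
        elif current is not None and (m := DEPS_RE.match(line)):
            value = m.group(1).strip()
            if value.lower() != "none":
                deps[current] = set(re.findall(r"Task (\d+\.\d+)", value))
    # A dependency on an ID that does not exist is usually a typo or a missing task.
    known = set(deps)
    for task, needs in deps.items():
        unknown = needs - known
        if unknown:
            raise ValueError(f"Task {task} depends on unknown tasks: {sorted(unknown)}")
    return deps


def execution_order(text: str) -> list[str]:
    """Return task IDs so that each task appears after its dependencies.

    Raises graphlib.CycleError if the dependencies are circular.
    """
    return list(TopologicalSorter(parse_todo(text)).static_order())
```

For the three tasks in the example, execution_order returns ["1.1", "1.2", "2.1"].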

Each task should have:

- a clear scope

- dependencies

- expected files or modules

- acceptance criteria

- test expectations

- ownership

- review notes or design constraints
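A checklist like this can double as a simple definition of ready. The sketch below shows one way a team might encode it, and it rests on assumptions. The Task fields mirror the list above, and readiness_problems reports what is missing before a task is handed to a developer and their agents. Empty dependencies and review notes are treated as acceptable, since a foundation task like Task 1.1 legitimately has no dependencies.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    """One unit of work with the fields listed above (names are illustrative)."""

    task_id: str
    scope: str = ""
    dependencies: list[str] = field(default_factory=list)
    expected_files: list[str] = field(default_factory=list)
    acceptance_criteria: list[str] = field(default_factory=list)
    test_expectations: list[str] = field(default_factory=list)
    owner: Optional[str] = None
    review_notes: str = ""


def readiness_problems(task: Task) -> list[str]:
    """Return the reasons a task is not ready to assign; an empty list means ready.

    Dependencies and review notes may legitimately be empty, so they are not checked.
    """
    problems = []
    if not task.scope.strip():
        problems.append("missing scope")
    if not task.expected_files:
        problems.append("no expected files or modules")
    if not task.acceptance_criteria:
        problems.append("no acceptance criteria")
    if not task.test_expectations:
        problems.append("no test expectations")
    if not task.owner:
        problems.append("no owner")
    return problems
```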

This is where experienced developers can raise the floor for everyone else.

They can point out bad task boundaries. They can catch sequencing problems. They can say, “This task is too big,” or “This abstraction does not deserve to exist yet,” or “We need the contract before the implementation.”

That is mentorship with operational value.

Branch and Execution Setup

The third phase is Branch and Execution Setup.

The graphic shows a feature branch, a spec folder, and a checklist.

That matters because agentic development needs isolation.

You want a place where the team can execute aggressively without making main absorb every experiment.

A practical structure might look like this:

feature branch
+-- feature.md
+-- TODO.md
+-- spec/feature-name/
    +-- checklist.md
    +-- decisions.md
    +-- review-notes.md

This gives both humans and agents a stable operating surface.

The agent is not being asked to “make the app better.”

That request is too vague to be useful.

It is being asked to complete a specific task, against a specific design, with a specific checklist.

That is a very different thing.
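One way to make that surface identical for every feature is to generate it when the branch is created. This is a minimal sketch under a few assumptions. The file names come from the structure above, and feature.md and TODO.md sit at the repository root as the tree suggests. scaffold_feature is a hypothetical helper rather than part of any tool.

```python
from pathlib import Path

# Assumed layout, mirroring the structure above; rename to suit your repository.
FEATURE_FILES = ["feature.md", "TODO.md"]
SPEC_FILES = ["checklist.md", "decisions.md", "review-notes.md"]


def scaffold_feature(repo_root: Path, feature_name: str) -> list[Path]:
    """Create any missing planning files for a feature and return the ones created.

    Existing files are never overwritten, so re-running it is safe.
    """
    spec_dir = repo_root / "spec" / feature_name
    spec_dir.mkdir(parents=True, exist_ok=True)
    targets = [repo_root / name for name in FEATURE_FILES]
    targets += [spec_dir / name for name in SPEC_FILES]
    created = []
    for path in targets:
        if not path.exists():
            # Start each file with a heading so humans and agents see the same skeleton.
            path.write_text(f"# {path.stem}\n", encoding="utf-8")
            created.append(path)
    return created
```

Running it once at the start of the feature gives every developer and every agent the same files to read and update.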

Developers Become Local Orchestrators

The fourth phase is Task Execution, and this is where the role shift gets interesting.

The developer is still responsible for the work.

But instead of personally typing every line, the developer locally orchestrates agents to complete the assigned task.

That might mean:

- loading TODO.md

- selecting an assigned task

- giving the agent the relevant design docs

- asking for an implementation plan

- reviewing the plan before code changes

- letting the agent edit

- running tests

- asking another agent to review the diff

- refining the result

- committing the change
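The first three steps amount to giving the agent a narrow, explicit brief. Below is a minimal sketch of assembling one. It assumes the TODO.md format from the decomposition example, with each task's details indented beneath its header line. extract_task and build_task_brief are hypothetical helpers, and the instruction wording is only illustrative. How the brief is handed to an agent depends on the tool you use.

```python
import re


def extract_task(todo_text: str, task_id: str) -> str:
    """Return one task's header line plus the indented detail lines under it."""
    header = re.compile(rf"^- \[[ xX]\] Task {re.escape(task_id)}:")
    lines = todo_text.splitlines()
    for i, line in enumerate(lines):
        if header.match(line):
            block = [line]
            for follow in lines[i + 1:]:
                if not follow.strip():
                    continue  # tolerate blank lines inside a task
                if not follow.startswith((" ", "\t")):
                    break  # reached the next task or a heading
                block.append(follow)
            return "\n".join(block)
    raise KeyError(f"Task {task_id} not found")


def build_task_brief(task_id: str, todo_text: str, design_text: str) -> str:
    """Assemble a scoped brief: one task, the design it must follow, plan first."""
    return "\n\n".join([
        f"You are working on Task {task_id} only.",
        "Task definition (from TODO.md):\n" + extract_task(todo_text, task_id),
        "Design (from feature.md):\n" + design_text.strip(),
        "Propose an implementation plan and wait for approval before editing files.",
    ])
```

Keeping other tasks out of the brief is deliberate: the agent sees only the work it owns and the design it must respect.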

This is not passive.

This is not “the bot did it.”

The developer owns the task. The agent is a tool. A very fast, very useful, very occasionally weird tool.

The workflow works best when task ownership is clear:

Task 2.1 assigned to Rob
+-- Rob orchestrates local agents
+-- Rob verifies the implementation
+-- Rob opens the PR
+-- Rob responds to review
+-- Rob updates task status

That accountability is important.

Without it, agentic work becomes hard to evaluate. Lots of movement, low traceability, and no clear owner for the result.
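That accountability can also be made explicit in tooling. The sketch below is hypothetical, and its status names and allowed moves are assumptions rather than a standard. It encodes only two rules from the tree above. First, the owner is the one who updates task status. Second, status moves only along an agreed path, and review is allowed to send work back.

```python
from dataclasses import dataclass

# Assumed status names and allowed moves; adapt them to your own board.
TRANSITIONS = {
    "todo": {"in_progress"},
    "in_progress": {"in_review"},
    "in_review": {"in_progress", "done"},  # review can send work back
    "done": set(),
}


@dataclass
class TaskStatus:
    task_id: str
    owner: str
    status: str = "todo"

    def move(self, new_status: str, actor: str) -> None:
        """Change status, allowing only the owner and only along TRANSITIONS."""
        if actor != self.owner:
            raise PermissionError(f"{actor} does not own Task {self.task_id}")
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(
                f"cannot move Task {self.task_id} from {self.status} to {new_status}"
            )
        self.status = new_status
```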

Pull Requests Become the Unit of Review

The fifth phase is Pull Request Workflow.

Each completed unit of work becomes a PR.

The PR should include:

- the task ID or checklist item

- what changed

- what agent assistance was used, if relevant

- what tests were run

- what risks remain

- what needs human attention
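These items are easy to standardize so they are not forgotten under time pressure. Below is a minimal sketch. The section headings and the pr_description helper are assumptions, and many teams would put the same sections in their hosting platform's pull request template instead.

```python
def pr_description(
    task_id: str,
    what_changed: list[str],
    tests_run: list[str],
    risks: list[str],
    needs_attention: list[str],
    agent_assistance: str = "",
) -> str:
    """Render a pull request body with one section per item listed above."""
    if not tests_run:
        # Force an explicit statement rather than a silent gap.
        raise ValueError("tests_run is empty; state which tests ran, or say that none did")

    def bullets(items: list[str]) -> str:
        return "\n".join(f"- {item}" for item in items) if items else "- None"

    sections = [f"Task: {task_id}", "## What changed\n" + bullets(what_changed)]
    if agent_assistance:  # included only when relevant
        sections.append("## Agent assistance\n" + agent_assistance)
    sections += [
        "## Tests run\n" + bullets(tests_run),
        "## Remaining risks\n" + bullets(risks),
        "## Needs human attention\n" + bullets(needs_attention),
    ]
    return "\n\n".join(sections)
```

Rendering empty sections as "None" rather than omitting them makes it visible that someone considered the question.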

AI-assisted review can happen here too.

Have an agent review the PR locally. Have it look for missed tests, bad abstractions, inconsistent naming, or places where the implementation drifted from the design.

But do not confuse AI review with team review.

AI can help prepare the conversation.

It should not replace the conversation.

Review Together

The sixth phase is the heart of the whole thing: Team-Based Review.

This is where I think a lot of AI adoption strategies are getting it wrong.

They treat review as a speed bump.

It is not.

Review is where the team learns.

A group review session lets everyone see:

- why the design changed

- where the implementation diverged

- which patterns are becoming standard

- which shortcuts are starting to smell

- which developers need more support

- which agents are producing useful work

- which tasks were under-specified

This is also where less experienced developers level up.

They get to hear senior engineers reason out loud.

Not just “change this line.”

The useful stuff:

- “This belongs behind an interface because we already have two providers.”

- “This should stay inline because abstracting it now hides the behavior.”

- “This violates the existing error-handling pattern.”

- “This test proves the happy path but misses the failure mode.”

- “This is technically correct and still too hard to maintain.”

That is the part AI cannot replace cleanly.

It can generate suggestions. It can summarize diffs. It can find suspicious code.

But the shared discussion is where the team builds taste.

Consolidate Progress

The seventh phase is Progress Tracking and Consolidation.

After review, the team updates TODO.md, moves completed tasks into CHANGELOG.md, and keeps the state of the feature visible.

This sounds small.

It is not small.

Shared state is what keeps parallel work from becoming parallel confusion.

The infographic makes this explicit:

- update TODO.md

- move completed tasks to CHANGELOG.md

- update status and docs

That gives the next developer, the next agent, and the next reviewer a current map.

No archaeology required.

The goal is simple: nobody should have to reconstruct the feature history from scattered PR comments when the task queue could have told the story directly.
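The move from TODO.md to CHANGELOG.md is mechanical enough to script. Below is a minimal sketch. It assumes checked tasks are written as checked boxes such as "- [x] Task 1.1: Define interfaces", with their details indented beneath them. consolidate is a hypothetical helper. It appends completed task titles to the changelog and removes them, with their details, from the queue. A person still decides when a task counts as complete.

```python
import re

# A checked task header, e.g. "- [x] Task 1.1: Define interfaces" (assumed format).
DONE_RE = re.compile(r"^- \[[xX]\] (Task .+)$")


def consolidate(todo_text: str, changelog_text: str) -> tuple[str, str]:
    """Move checked tasks from TODO.md text to CHANGELOG.md text.

    Completed task titles are appended to the changelog; their indented detail
    lines are dropped from the queue along with them. Returns (todo, changelog).
    """
    kept, done = [], []
    skipping_details = False
    for line in todo_text.splitlines():
        if m := DONE_RE.match(line):
            done.append(f"- {m.group(1)}")
            skipping_details = True
        elif skipping_details and line.startswith((" ", "\t")):
            continue  # detail line belonging to a completed task
        else:
            skipping_details = False
            kept.append(line)
    if not done:
        return todo_text, changelog_text
    new_changelog = changelog_text.rstrip("\n") + "\n" + "\n".join(done) + "\n"
    return "\n".join(kept) + "\n", new_changelog
```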

Iterate Until the Feature Is Complete

The eighth phase is Iteration to Completion.

You repeat the loop:

- Execute assigned tasks.

- Open PRs.

- Review together.

- Consolidate progress.

- Merge into the feature branch.

- Continue until the feature is complete.
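Within that loop, the dependency list also tells the team which tasks can be assigned at the same time. The sketch below uses Python's standard graphlib module (3.9+) to group tasks into rounds, where every task's dependencies finish in an earlier round. Task 1.3 is hypothetical and is added only so the example has something to parallelize. In practice, a task can start as soon as its own dependencies merge rather than waiting for a whole round, so treat the rounds as a planning view.

```python
from graphlib import TopologicalSorter


def parallel_batches(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group task IDs into rounds; each task's dependencies finish in earlier rounds."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError for circular dependencies
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


# Task IDs from the TODO.md example, plus a hypothetical Task 1.3.
queue = {"1.1": set(), "1.2": {"1.1"}, "1.3": {"1.1"}, "2.1": {"1.1", "1.2"}}
print(parallel_batches(queue))  # [['1.1'], ['1.2', '1.3'], ['2.1']]
```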

The final merge to main should feel almost boring.

That is the goal.

By then, the team has already reviewed the design, watched the implementation evolve, corrected drift, updated the task queue, and kept the feature branch coherent.

Shipping should feel like completing a known sequence, not discovering the feature all over again at merge time.

Why This Upskills Instead of Replaces

The uncomfortable truth is that AI can make weaker developers look stronger for a short period of time.

It can also make strong developers lazy if they stop reading, stop reviewing, stop designing, and stop asking why.

That is the atrophy risk.

If AI does more of the implementation work, humans need to do more of the thinking work around implementation:

- architecture

- design boundaries

- naming

- refactoring

- test strategy

- review quality

- dependency management

- operational risk

- maintainability

This workflow keeps those muscles active.

It gives less experienced developers a ladder:

- They see the design conversation.

- They receive smaller tasks with clearer boundaries.

- They learn how to prompt and constrain agents.

- They open PRs with better context.

- They participate in group review.

- They hear how senior people evaluate tradeoffs.

- They build judgment instead of only building output.

That matters for employability.

Not in a vague motivational-poster way. In a very practical way.

If AI reduces the market value of “I can produce code,” then developers need to increase the value of “I can design, evaluate, maintain, and improve systems.”

That is the career move.

The Human Loop Is the Quality Loop

The core principles in the infographic are doing a lot of work:

- Design Before Code

- Decompose Work

- Parallel Execution

- Review Over Creation

- Continuous Integration

That is a useful philosophy for agentic teams.

Design before code keeps the team aligned.

Decomposition makes the work small enough to assign, review, and recover.

Parallel execution lets agents and humans move quickly without stepping on each other constantly.

Review over creation puts human judgment where it has leverage.

Continuous integration keeps the feature branch close enough to reality that review feedback still matters.

The model is not “AI replaces the team.”

The model is “AI increases the team’s execution bandwidth, so the team must increase its design and review discipline.”

That is the trade.

If you take the bandwidth and skip the discipline, you get faster entropy.

More output does not automatically mean more progress.

A Practical Starting Point

If I were introducing this on a real team, I would start small.

One feature. One feature branch. One shared TODO.md.

The minimum viable version:

- Hold a group design session.

- Write feature.md.

- Break it into task phases in TODO.md.

- Assign each task to a developer.

- Let each developer orchestrate agents locally.

- Require a PR per task.

- Review PRs together twice a week.

- Update TODO.md and CHANGELOG.md after each merge.

- Merge the feature branch when the task queue is done.
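The last step, merging only when the task queue is done, is easy to automate so it does not depend on anyone's memory. Below is a minimal sketch. It assumes the TODO.md checkbox format from the decomposition example. open_tasks and merge_gate are hypothetical names, and wiring the check into CI is left to whatever pipeline the team already uses.

```python
import re
from pathlib import Path

# An unchecked task line such as "- [ ] Task 2.1: Notification service" (assumed format).
OPEN_TASK_RE = re.compile(r"^[ \t]*- \[ \] (Task .+)$", re.MULTILINE)


def open_tasks(todo_text: str) -> list[str]:
    """Return the titles of tasks that are not yet checked off."""
    return OPEN_TASK_RE.findall(todo_text)


def merge_gate(todo_path: Path) -> int:
    """Return an exit code for a CI step: 0 when the queue is done, 1 otherwise."""
    remaining = open_tasks(todo_path.read_text(encoding="utf-8"))
    for task in remaining:
        print(f"still open: {task}")
    return 1 if remaining else 0
```

A CI job on the feature branch could call sys.exit(merge_gate(Path("TODO.md"))) and block the merge to main on a non-zero result.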

Do that once before trying to build a giant enterprise AI transformation program with a logo, a steering committee, and six dashboards nobody trusts.

Learn the workflow with a real feature.

Then refine it.

The Bottom Line

Agentic software development should not be an excuse to hollow out engineering teams.

It should be a chance to make teams better.

The path I trust is design first, decomposition second, agent-assisted execution third, and collective review all the way through.

That structure lets teams move faster without surrendering the hard-earned parts of software engineering: judgment, architecture, maintainability, mentorship, and shared ownership.

AI can help us produce more code.

The real question is whether we can become better stewards of the code we produce.

That is where the humans still matter.

-Rob